The Confidence Trap

At ITAaaS, one of our founding principles is: architecture first, products second. No vendor ties, no compromises. This independence is why our AI discovery service focuses on practical applications that actually work, not what products we can sell.

The mainstream AI tools flooding the market project absolute confidence even when they're completely wrong. Research from MIT shows these models can't distinguish between what they know and what they're fabricating. They'll tell you the wrong answer with the same certainty as the right one. For enterprise systems, especially those running critical operations, governance, accuracy and risk mitigation aren't optional extras.

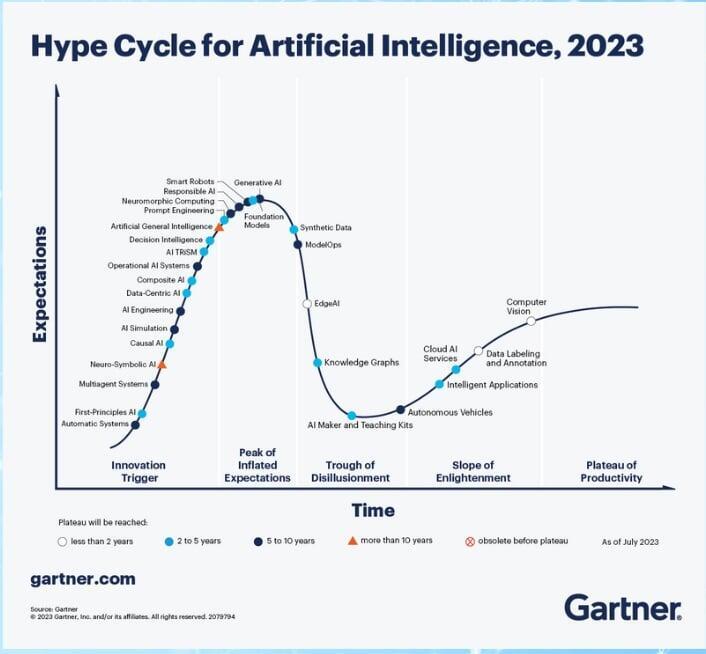

The Gartner AI Hype Cycle places Generative AI at the Peak of Inflated Expectations. We've seen this movie before. The question isn't whether there'll be a correction; it's whether you'll be caught holding the bag when it happens.

Case in Point: The "Autonomous" Robot That Wasn't

Humanoid robots perfectly illustrate the hype-reality gap. Companies like 1X Technologies, Figure, and others are taking pre-orders right now, complete with slick marketing videos promising household help. The price tags? Between $16,000 and $30,000 for your own robot butler.

The reality? Those impressive demos were teleoperated by humans, not AI:

"Autonomous" robots were actually remote-controlled by operators, a fact buried in fine print or revealed by investigative journalists

Tesla's Optimus robot demos? Same story—humans behind the curtain, confirmed when event attendees noticed the suspiciously human-like responses

Real autonomy could still be years away (Tesla's Autopilot took over a decade to develop, and roads are far simpler, rule-based environments compared with the chaos of a home)

A potential privacy nightmare: Would you honestly agree to allow strangers to operate cameras inside your home? Research from Mozilla consistently flags connected home devices as privacy disasters

Watch these reality checks:

"The Problem with this Humanoid Robot" – breaks down the teleoperation deception

"I Tried the First Humanoid Home Robot. It Got Weird" – WSJ's hands-on test reveals the gaps

This isn't just about robots. It's a pattern across the AI industry. Stanford's AI Index shows the growing gap between AI capability claims and measured performance.

Why This Matters for Your Business

If consumer robots have hidden human operators, who's really behind your "autonomous" enterprise AI? The implications are staggering:

Hidden Human Dependencies: When Amazon's "Just Walk Out" technology was revealed to rely on 1,000+ human reviewers in India, it exposed a truth: many "AI" solutions are human-powered Mechanical Turks. Is your vendor's "proprietary AI" actually a call centre in disguise?

Data Privacy Catastrophes: Every human-in-the-loop is a potential data breach. The Uber hack of 2022 started with social engineering. If your "AI" has hidden human operators, your attack surface just exploded.

Compliance Nightmares: GDPR, Australia's Privacy Act, and upcoming AI regulations all require transparency about data processing. Hidden human operators could put you in breach before you've even launched.

For mission-critical systems, ask the following questions:

What's the actual capability today (not the 18-month roadmap)?

How is accuracy validated? What's the error rate? Where's the model card?

Who has access to your data streams? Are there human-in-the-loop processes you haven't been told about?

What happens when it fails? What's your recovery plan?

Have you seen the architecture? Or just marketing slides?

Is this solving a real problem or just theatre?
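
The accuracy question above is one you can check yourself rather than take on trust. The following is a minimal sketch, assuming you hold a sample of inputs with known correct answers and the vendor system's outputs for the same inputs. The function name `error_rate_with_interval` is hypothetical, and the Wilson score interval is one common choice for putting a range around a measured error rate, not the only one.

```python
import math

def error_rate_with_interval(predictions, labels, z=1.96):
    """Return (error_rate, lower, upper) for a labelled sample.

    Uses a Wilson score interval; z=1.96 corresponds to roughly 95% confidence.
    """
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(labels)
    # Count every output that disagrees with the known correct answer.
    errors = sum(p != y for p, y in zip(predictions, labels))
    p_hat = errors / n
    denom = 1 + z**2 / n
    centre = (p_hat + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return p_hat, max(0.0, centre - half), min(1.0, centre + half)
```

A small sample gives a wide interval, which is itself useful: if a vendor quotes a single accuracy figure without a sample size, you cannot tell how much weight it carries.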

The Architecture-First Alternative

Good AI implementation starts with architecture, not products. Here's what that looks like:

Define the Problem – Not "we need AI" but "we need to reduce document processing time by 60%"

Assess Current State – Document existing systems, data quality, integration points

Design for Failure – Because AI fails differently than traditional software

Build Governance First – Before the first model runs, know who's accountable when it goes wrong

Start Small, Scale Smart – Pilot with low-risk processes, expand based on proven ROI

This approach has saved our clients millions. One of our early clients was sold a $1M "AI transformation" with a promised 12-month ROI. We helped them pivot to a $40K integrated automation pilot instead. Why? The million-dollar model was a disruptive transformation they couldn't realistically manage or grow. The pilot? A low-risk tool perfectly suited to their actual capabilities and long-term needs. They now have a tool they understand and can manage themselves, and they saved $960K against the original quote, without the transformation trauma.
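
To make the "Design for Failure" and "Build Governance First" steps concrete, here is a minimal sketch of one way they could look in code. It is an illustration under stated assumptions, not a description of any client system: `GuardedStep`, `AuditEntry` and the field names are hypothetical, the model is any callable you supply, and the validation rule is a deterministic check you define. The idea is that the model's output is never trusted on its own say-so; anything that fails the check or raises an error goes to a human review queue, and every decision is recorded against a named owner.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional

@dataclass
class AuditEntry:
    timestamp: str
    input_id: str
    outcome: str            # "accepted" or "escalated"
    reason: str
    accountable_owner: str  # who answers for this decision

@dataclass
class GuardedStep:
    model: Callable[[str], str]      # hypothetical model call
    validate: Callable[[str], bool]  # deterministic check on the model output
    accountable_owner: str
    audit_log: List[AuditEntry] = field(default_factory=list)
    review_queue: List[str] = field(default_factory=list)

    def run(self, input_id: str, payload: str) -> Optional[str]:
        try:
            result = self.model(payload)
            ok = self.validate(result)
            reason = "passed validation" if ok else "failed validation"
        except Exception as exc:
            # A model failure is an expected event, not a crash.
            result, ok, reason = None, False, f"model error: {exc}"
        if not ok:
            # Operations continue: the item goes to a person instead.
            self.review_queue.append(input_id)
        self.audit_log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            input_id=input_id,
            outcome="accepted" if ok else "escalated",
            reason=reason,
            accountable_owner=self.accountable_owner,
        ))
        return result if ok else None
```

The human involvement here is explicit and logged, which is the opposite of the hidden operators described earlier: you know when a person is in the loop, why, and who is accountable.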

Red Flags to Watch For

When evaluating AI vendors, these should trigger immediate scrutiny:

"Proprietary algorithm" with no technical documentation

Reluctance to discuss failure modes or error rates

No clear data lineage or audit trail

"Black box" explanations for enterprise decisions

Pricing based on "AI complexity" rather than business value

No option for on-premises deployment (where's your data really going?)

Vague answers about human oversight requirements

The Bottom Line

AI's future is here, and it's messy. The gap between marketing promise and operational reality can crater your ROI—or worse, your compliance status. McKinsey reports that 70% of companies see minimal or no impact from AI initiatives. Don't be part of that statistic.

At ITAaaS, we help enterprises separate solid applications from shiny toys. We don't sell products; we match technology to your actual needs and risk appetite across six focus areas:

Data Governance – Because garbage in still means garbage out, even with AI

Platform Assessment – Understanding what you have before adding complexity

AI Discovery/POV – Finding genuine use cases, not forcing square pegs

IoT/OT Assessment – Where physical meets digital, risks multiply

Cybersecurity – AI is an attack vector, not just a defence tool

Business Resiliency & Recovery – When AI fails, operations must continue

Our vendor independence means we can tell you the truth: sometimes the best AI strategy is to wait. Sometimes it's to move fast. Always, it's to move smart.

Ready for a grounded conversation about AI that won't leave you explaining to the board why your "robot butler" can't wash dishes—or why strangers were watching it try?

Contact us for an honest assessment of your AI readiness. No products to push. Just clear-eyed architecture advice that protects your investment and your reputation.